Gamarjoba.

A summer school on advanced programming and contemporary art.

A peer-to-peer assembly for hack and fun.

Pick a full-time course

Check out art electives

Come and play

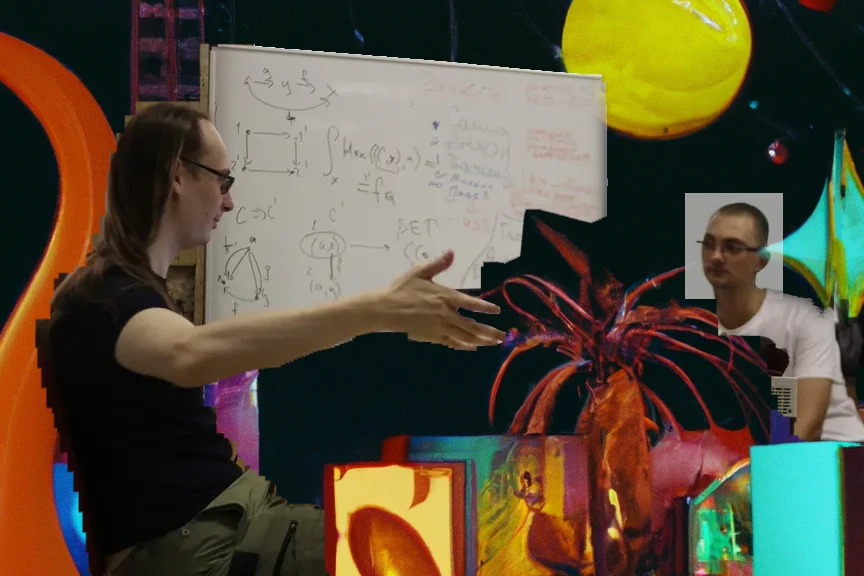

Names & Faces

Courses (tentative)

Machine learning greatest hits

This course fits both R&D researchers from other fields who are curious about what’s happening, but have no interest in distinguishing kittens from dogs using

fit_predict()and true machine learners who feel entrenched in their subdomain (CV, for example) and want to see what’s happening in other areas. (Presenting a cool article is highly encouraged!)The course will be conducted by several seminarists with expertise in their particular fields, and it will work like this: we will read the articles, discuss them together and decipher them until all the aspects that interest us become clear, and weave narratives about where things are going or have gone.

Unlike other courses at the school, which may teach how to do something hands-on through lectures and assignments, this one is aimed more at conceptual understanding (we will be reading and maybe deriving formulas, but we won’t be writing much code) and peer-to-peeredness (if you’re proficient in X — do tell, it’s a seminar).

Purescript

PureScript is a purely functional language borrowing many concepts from Haskell but designed for compiling into JavaScript.

In this course, you’ll get:

- a brief introduction to functional programming

- a bit of personal practice

- a collective exploration of the language — writing our own implementation of the Virtual DOM

The course will be divided into two consecutive parts:

- Fundamentals: an introduction to PureScript and FP. Pure functions, effects, monads, language syntax.

- Practice: hacking VDOM. We’ll take a look inside the Halogen framework. Implementation of our own diff and patch. Tree traversal. Interaction of PureScript with JavaScript.

You can start with the first part to dive in gently, or skip it to plunge into VDOM from the get-go.

Focused Practice on Gate Arrays

Programming is so intricately bound to the semantics of the von Neumann machine that many simply can’t envision an algorithm being implemented by anything other than a chain of instructions, multiple cores with multiple chains, or microcode with signal control generation from a quasi-instruction chain.

In fact, the CPU and all its variants are only a very specific instance of what can be accomplished in hardware. To grasp what can be done in hardware, one must not view it in programming terms (loops, function calls, etc.), but rather through hardware concepts: combinational clouds and state elements, pipelines and queues, clock cycles and within-cycle delays, which determine the clock frequency.

Over the last 30 years, chip designers have not been drawing digital diagrams manually on a screen, but have been synthesizing them with hardware description language (HDL) code, such as Verilog or VHDL. This code may resemble programming code, but it has different semantics, which we’ll explore in this course. Once developed, this code can be synthesized into a physical chip design (how new designs are made) or converted into a logical element graph and flashed into FPGA memory.

FPGA is a chip where transistor connections are programmable.

By flashing it with this code, we get a hardware accelerator that is slower than a specifically crafted, task-focused circuit, but for many tasks, it is faster/more energy-efficient than a conventional CPU with equivalent software. This is how new processors and chips are prototyped, and hardware accelerators are developed.

In addition to simple FPGA labs, we’ll demonstrate how to build a RISC-V processor and use FPGA for sound processing. We’ll also look at the Open Lane ASIC design tool, a free and open-source alternative to commercial packages from Synopsys and Cadence.

Finally, we will connect other school topics such as formal methods and constraint solvers with their use in electronic companies. In these companies, temporal logic proofs are applied for pipeline verification, and constraint solvers are used for generating non-contradictory pseudo-random transactions during functional verification through simulation.

Course Plan by days:

- Combinatorial logic - computations without cycles. Hardware description languages, ASIC and FPGA routes, as well as life in the electronics industry.

- Sequential logic, clock signal, and finite state machines. Application for music recognition.

- The two sides of a processor - architecture and microarchitecture. Analysis of the minimalist schoolRISCV core.

- Unstoppable force: why a specialized ASIC is orders of magnitude faster than a CPU. Pipelines, queues, embedded memory blocks, and clock frequency issues. Applications for GPUs, network chips, and machine learning accelerators.

- Formal verification, using C++ for FPGA project development, and for dessert: an example breakdown of a processor inside an FPGA, connected to VGA and playing Tetris.

Prerequisites: You don’t need any prior knowledge about microelectronics, we’ll explain everything! All you need are programming skills and computer literacy.

Zero-knowledge proofs

ZKP is one of the most exciting and valuable theories behind modern Web3 systems. Over the last 4-5 years, the number of projects using ZKP has dramatically increased, and ZKP approaches evolved so that they could now be used almost everywhere: from creating proofs for assets transferring to solving scalability problems. It makes ZKP a new frontier of Web3 and decentralized systems.

This course aims to show the math behind ZKP and give some practical knowledge. We’ll dive into modern ZKP frameworks, DSLs, lookup arguments techniques, zkEVM, and practical applications.

10 calculi of Isabelle

It’s nowadays fashionable to write about proof assistants based on dependent types. Mainly thanks to Kevin Buzzard’s efforts to popularize the Lean Prover among “pure” mathematicians. This is good, but not good enough, since it overshadows systems based on higher-order logic and simply-typed λ-calculus; systems that have more libraries and industrial use-cases.

While Coq has CompCert to its credit, HOL4 verified the CakeML compiler, and Isabelle/HOL the seL4 microkernel. HOL4 was also used to formalize the semantics of x86, POWER, and ARM instruction sets. Lean 3 is famous for its Mathlib library, which systematically formalizes vast areas of modern mathematics, but in terms of breadth, it still falls short of the Archive of Formal Proofs — a catalogue of libraries for Isabelle/HOL, constantly updated and kept up-to-date.

This bias in popularity is all the more surprising given that dependent type theories are objectively more complex than higher-order logic. Moreover, dependent types are less amenable to automation, and tools like QuickChick and CoqHammer appeared several years after their Isabelle/HOL counterparts — Nitpick and Sledgehammer. The Isabelle/Isar language remains a poster child for writing structured, human-readable proofs.

If you’re already got interested in Isabelle/HOL, then half of this course’s mission is accomplished :)

The second half is to get intimately acquainted with the tools of formal language theory: calculi, their semantics, and meta-theorems.

To do this, we will start with an introduction to programming and proving properties of programs in Isabelle/HOL, and then move on to a leisurely examination of the paper “A Verified Decision Procedure for Orders in Isabelle/HOL”. The paper gradually builds and verifies increasingly complex languages (calculi) for expressing judgments about the order relation between objects (something is greater than something else, but less than or equal to a third thing). The examples are simple, but not toy ones — ultimately, the verified decision procedure for order theory was incorporated into Isabelle/HOL itself, and now we can use it to automate proofs.

If the time permits, we’ll get acquainted with relational semantics, a (homebrew) proof systems and their proof terms, and the refinement of declarative specifications into executable ones.

SAT / SMT

SAT/SMT solvers are heuristic engines for solving NP-complete problems in fields ranging from software and hardware verification to cryptography and bioinformatics. This course is about how they work inside: how to practically solve NP-complete problems.

From the solver’s point of view, the task is to solve equations in a particular logic or theory. For example, in propositional logic, where only boolean variables and standard logical operators “and”, “or”, “not” and “implies” are used. Or in the logic of linear arithmetic, which includes logical operators, as well as arithmetic operations “+” and “multiplication by a constant”. Using equations from a specific theory or their combination, one can attempt to crack a cipher or check the logic of a program for correctness.

The first problem that solvers started to solve is SAT, that is, the problem of determining the satisfiability of Boolean formulas. According to Cook-Levin theorem, this problem is NP-complete and most likely does not have a polynomial algorithm for its solution. However, this is not so bad: first, there are many heuristics and algorithms that speed up the brute-force solution of SAT tasks, and second, many theories are simply undecidable, that is, there is no algorithm that could find a solution in finite time.

In this course we will:

- Explore the foundation of any solver - the algorithms of Conflict-Driven Clause Learning (CDCL) and Davis-Putnam-Logemann-Loveland (DPLL). The CDCL algorithm solves the SAT problem, and DPLL allows you to easily attach a resolution procedure to it, that is, an algorithm that solves the equation in a specific theory.

- Consider various theories (linear arithmetic, bit vectors, arrays, pointers) and their decidability. If a theory is undecidable, we will try to find out the conditions under which it becomes decidable, and also study the corresponding resolution procedures.

- Look at applications in operations research, cryptography and verification.

Modern Lisps

Tired of waiting for the whole project to recompile just to test a minor change? Do you have an itch caused by the lack of a particular feature in your programming language? Have you always wanted to explore the magical world of Lisp but didn’t know which dialect to pick or where to start? This course has answers to those questions and almost a decade of professional Lisp experience to share with you.

Functional programming is no surprise nowadays, but combining it with REPL and TDD to execute code, check it, and see the results as you type is not that common. All our practice will be highly interactive, programs are easily runtime-introspectable, and feedback loops are as short as possible.

In addition to REPLs, Lisps are also famous for their homoiconicity and macros, and during the course, we will do a lot of meta-programming: from adding missing language features to creating data-driven eDSLs and interpreters for them. We will embed one Lisp dialect into another, launch the same code in different runtimes, and do markup, styling, database querying, data transformation, all in a single language.

Of course, we will cover a brief history of Lisp language family, take a look at the basic building blocks, elegance and simplicity of the syntax, learn about what is so special about Lisp macros, discuss primary differences of various dialects, and try to understand which option is right for which task.

After that, we will go all the way down with Lisp: from configuring a text editor in Elisp and building a web application in Clojure(Script) to describing the whole operating system and deploying it to the server using Guile Scheme and Guix.

Static analysis

Static analyzers automatically check the properties of programs that interest us, without executing them. Typically, they are used to search for errors and vulnerabilities in programs, to improve their efficiency through optimizations, to help understand programs, and to perform refactoring.

We will take a small model imperative programming language based on Lua. Then we will implement an interpreter for it, and then a data flow analyzer using various abstract domains as examples. All of this we will do in the functional programming language OCaml or its dialect - Reason. Along the way, we will study all the necessary theory, as well as discuss practical details of implementation, in other words, how to write analyzer code succinctly, beautifully, and expansively.

The topics from a theoretical standpoint that we will cover:

- Basic concepts of static analysis

- Why creating a perfect static analyzer is actually impossible, but we are not giving up

- Syntax and semantics of programs

- Abstract interpretation

- Lattices, fixed point search algorithms, and their efficiency

- Monotonic frameworks for data flow analysis

Prerequisites:

What you don’t necessarily need to know:

- Static analysis (it’s a course on static analysis)

- Monads, lattices (we’ll cover), and other jargon

- OCaml / Reason, Haskell, and other languages in the ML family (we’ll learn)

It will be useful to have some basic experience with functional-style programming: be familiar with map/filter/fold.

A typical day goes like

| 9:30 | Wake up |

| 9:45 | Morning yoga |

| 10:30 | Breakfast |

| 11:00 | Seminar |

| 12:15 | Break |

| 12:30 | Lecture |

| 13:45 | Dinner |

| 14:30 | Dance class |

| 15:45 | Break |

| 16:00 | Lecture |

| 17:15 | Snack time |

| 17:30 | Seminar |

| 18:45 | Break |

| 19:00 | Mountain biking |

| 20:15 | Supper |

| 21:00 | Nighttime walk |

| 23:00 | Prepare for sleep |

| 0:00 | Zzz... |

Art activities

Venue and accomodation

The school's hosted at Hotels & Preference Hualing Tbilisi, a 5-star venue near Tbilisi Sea. The sea in question is a three minutes walk away.

The drive from Tbilisi airport takes 20 minutes, while a shuttle bus from Yerevan will get you there in about five hours.

Check out the venue presentation↗

Check out the venue presentation↗

Participation cost

The cost covers:

- Accomodation for 9 days and 8 nights

- Pool/sauna/fitness area access

- Three buffet meals + an afternoon snack a day

- A 24/7 snack station

- Participation in all school events

- and some swag

The only thing you have to fix yourself is getting to the venue. We’ll help folks self-organize transfers from Vladikavkaz, Batumi or Yerevan. Once in Tbilisi, just hail a taxi.

You can share a room with another participant or book a single room: that’s what xxxx/yyyy$ stand for. You can also get a fancy room with jacuzzi and sponsor grants for those who need them with a sponsor’s ticket.

If you already live in Tbilisi and are comfortable with the commute, you can opt out of hotel accomodation.

We kindly ask corporate participants to pay more than the regular ticket. This helps us make the school more accessible for other participants.

Select your participation type to see your ticket price:

Cost breakdown

Budget (excluding accomodation)

| Revenue | loading... |

| Expenses | loading... |

| Profit | loading... |